EU AI Act Readiness & Regulatory Compliance.

After AI inventory, the next layer is regulatory classification. Organizations need to know which of their AI systems are prohibited, high-risk, limited-risk or low-risk, and what evidence must exist for each category.

This AIGN category maps solutions that help organizations operationalize EU AI Act readiness through classification, role mapping, obligations management, documentation, workflow control, evidence reuse and audit-oriented reporting.

From legal obligation to operational evidence.

EU AI Act readiness is not a legal memo. It requires structured use-case classification, provider/deployer role analysis, obligation tracking, documentation workflows, transparency controls and defensible audit evidence.

AIGN does not rank legal claims. AIGN maps governance capabilities.

Category focus: classification, obligations, documentation, workflows, evidence.

Why regulatory readiness needs a system.

EU AI Act readiness is not achieved by policy text alone. It requires a repeatable operating model that connects AI systems, legal roles, risk categories, obligations, documentation and ownership.

Determine risk level

Classify AI systems against the EU AI Act's risk categories: prohibited practices, high-risk use cases, limited-risk systems with transparency duties, and low-risk systems.

Define responsibility

Clarify whether the organization acts as provider, deployer, importer, distributor or product manufacturer, or holds several of these roles at the same time.

Manage obligations

Translate obligations into tasks, workflows, evidence requests, approvals, human oversight points, transparency controls and documentation requirements.

Build defensibility

Create traceable records, decisions, documentation, audit trails and compliance evidence that can support management, audit and regulatory review.
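The four steps above form one chain: a system's risk level and the organization's role(s) together determine which obligations apply, and each obligation becomes an owned task that later produces evidence. The following is a minimal Python sketch of that chain under a simplified data model. The names `RiskTier`, `Role`, `AISystem`, `Task`, `derive_tasks` and the `OBLIGATIONS` catalogue are hypothetical illustrations; the catalogue is deliberately incomplete and is not a legal reading of the EU AI Act.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    PROHIBITED = "prohibited"
    HIGH = "high-risk"
    LIMITED = "limited-risk"
    LOW = "low-risk"


class Role(Enum):
    PROVIDER = "provider"
    DEPLOYER = "deployer"
    IMPORTER = "importer"
    DISTRIBUTOR = "distributor"
    PRODUCT_MANUFACTURER = "product manufacturer"


# Hypothetical, deliberately incomplete obligation catalogue keyed by
# (risk tier, role). A real mapping requires legal analysis per system.
OBLIGATIONS: dict[tuple[RiskTier, Role], list[str]] = {
    (RiskTier.HIGH, Role.PROVIDER): [
        "risk management system",
        "technical documentation",
        "human oversight design",
        "record-keeping / logging",
    ],
    (RiskTier.HIGH, Role.DEPLOYER): [
        "use per instructions",
        "assign human oversight",
        "monitor operation",
    ],
    (RiskTier.LIMITED, Role.PROVIDER): ["transparency notice"],
    (RiskTier.LIMITED, Role.DEPLOYER): ["transparency notice"],
}


@dataclass
class AISystem:
    name: str
    tier: RiskTier
    roles: set[Role]  # an organization may hold several roles at once
    owner: str        # accountable owner inside the organization


@dataclass
class Task:
    system: str
    obligation: str
    role: Role
    owner: str
    status: str = "open"


def derive_tasks(system: AISystem) -> list[Task]:
    """Turn the (tier, role) obligations of one system into owned tasks."""
    if system.tier is RiskTier.PROHIBITED:
        # Prohibited practices need escalation, not a compliance task plan.
        raise ValueError(f"{system.name}: prohibited practice, escalate")
    tasks: list[Task] = []
    # Sort roles so the task order is deterministic.
    for role in sorted(system.roles, key=lambda r: r.value):
        for obligation in OBLIGATIONS.get((system.tier, role), []):
            tasks.append(Task(system.name, obligation, role, system.owner))
    return tasks


if __name__ == "__main__":
    # Hypothetical example system, for illustration only.
    bot = AISystem("support-bot", RiskTier.LIMITED,
                   {Role.PROVIDER, Role.DEPLOYER}, owner="Customer Service")
    for task in derive_tasks(bot):
        print(task.role.value, "->", task.obligation)
```

Keying obligations on the (risk tier, role) pair reflects the point that one organization can hold several roles for the same system, so the task list grows with each role it holds.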

Selected EU AI Act readiness examples mapped by AIGN.

The following examples illustrate how AI Governance providers support organizations in translating EU AI Act obligations into structured workflows, documentation and evidence.

Saidot

Saidot is positioned as an EU-native AI Governance platform with strong relevance to EU AI Act readiness, governance workflows, evidence reuse, risk visibility and compliance-oriented system management.

- EU-native AI Governance platform positioning

- EU AI Act templates and compliance workflows

- Evidence reuse across AI systems

- Governance data connection across AI portfolios

- Relevant for enterprises and public organizations

Trustible

Trustible is positioned as a purpose-built AI Governance platform for enterprise AI intake, risk assessments, compliance frameworks and approval workflows.

- AI intake and structured use-case workflows

- Risk assessments and compliance framework alignment

- Approval and governance workflow support

- Enterprise AI adoption control

- Relevant for policy-to-process implementation

EQS AI Compliance

EQS positions its AI compliance solution as a platform for EU AI Act compliance, risk classification, audit automation and trustworthy AI governance structures.

- EU AI Act compliance platform positioning

- Centralized AI risk classification

- Audit automation and documentation support

- Trustworthy AI governance workflows

- Relevant for compliance and privacy-led organizations

What organizations need from this category.

AIGN evaluates EU AI Act readiness solutions according to whether they help organizations turn legal obligations into operational governance.

Obligation translation

Strong solutions help translate abstract legal requirements into concrete tasks, workflows, documents, approvals and control responsibilities.

Evidence generation

Readiness is not a statement. It requires records, classifications, documentation, decisions, logs and traceable proof of governance action.

Accountability reporting

Mature solutions support management visibility, compliance oversight, audit review and escalation where AI risk becomes material.
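Evidence generation and accountability reporting meet in a simple gap check: compare the evidence each AI system is expected to have against what has actually been recorded, and surface systems with gaps for escalation. The following is a minimal Python sketch under stated assumptions: `EvidenceRecord`, `evidence_gaps`, `readiness_report`, `systems_to_escalate` and the evidence item names are hypothetical, and the escalation rule (any open gap) is a placeholder for an organization's own materiality criteria.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class EvidenceRecord:
    """One traceable piece of governance evidence (hypothetical structure)."""
    system: str
    item: str              # e.g. "risk classification decision"
    author: str            # who recorded the decision or document
    recorded_at: datetime  # when it was recorded, for the audit trail


def evidence_gaps(system: str, required: set[str],
                  records: list[EvidenceRecord]) -> set[str]:
    """Return the required evidence items for `system` with no record yet."""
    present = {r.item for r in records if r.system == system}
    return required - present


def readiness_report(requirements: dict[str, set[str]],
                     records: list[EvidenceRecord]) -> dict[str, list[str]]:
    """Map each system to its sorted list of missing evidence items."""
    return {name: sorted(evidence_gaps(name, required, records))
            for name, required in requirements.items()}


def systems_to_escalate(report: dict[str, list[str]]) -> list[str]:
    """Placeholder escalation rule: any system with at least one gap."""
    return sorted(name for name, gaps in report.items() if gaps)


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    # Hypothetical records and requirements, for illustration only.
    records = [
        EvidenceRecord("cv-screening", "risk classification decision", "Legal", now),
    ]
    requirements = {
        "cv-screening": {"risk classification decision", "human oversight assignment"},
        "support-bot": {"transparency notice"},
    }
    report = readiness_report(requirements, records)
    print(report)
    print(systems_to_escalate(report))
```

Keeping evidence as timestamped, attributed records (rather than a yes/no checklist) is what lets the same data feed both the gap analysis and later audit review.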

How this category supports enterprise readiness.

EU AI Act readiness solutions become valuable when they connect legal interpretation with inventory, roles, obligations, documentation and defensible evidence.

Governance capabilities

- Risk classification: Core

- Provider / deployer role mapping: Core

- Obligations management: High

- Transparency controls: High

- Documentation workflows: High

- Audit evidence: High

Relevant AIGN pathways

- AIGN AI Governance Briefing: Trigger

- AIGN OS Governance Layer: Operating Model

- ASGR Readiness Assessment: Gap Analysis

- AI Governance Radar Suite: Signal Layer

- Board & Audit Governance: Escalation

- AIGN Trust Label Pathway: Evidence

The providers shown above are selected market examples for category orientation. Their inclusion does not constitute certification, legal endorsement, regulatory validation or a compliance guarantee. AIGN.global structures the AI Governance solution landscape by capability, relevance and use-case logic. Providers interested in a formal listing, extended profile or reviewed category placement may apply for inclusion in the AIGN AI Governance Solutions Directory.

Become visible in the EU AI Act readiness category.

If your platform, tool, metric or advisory solution helps organizations classify AI systems, manage obligations, document decisions or create audit-ready evidence under the EU AI Act, apply for inclusion in the AIGN AI Governance Solutions Directory.

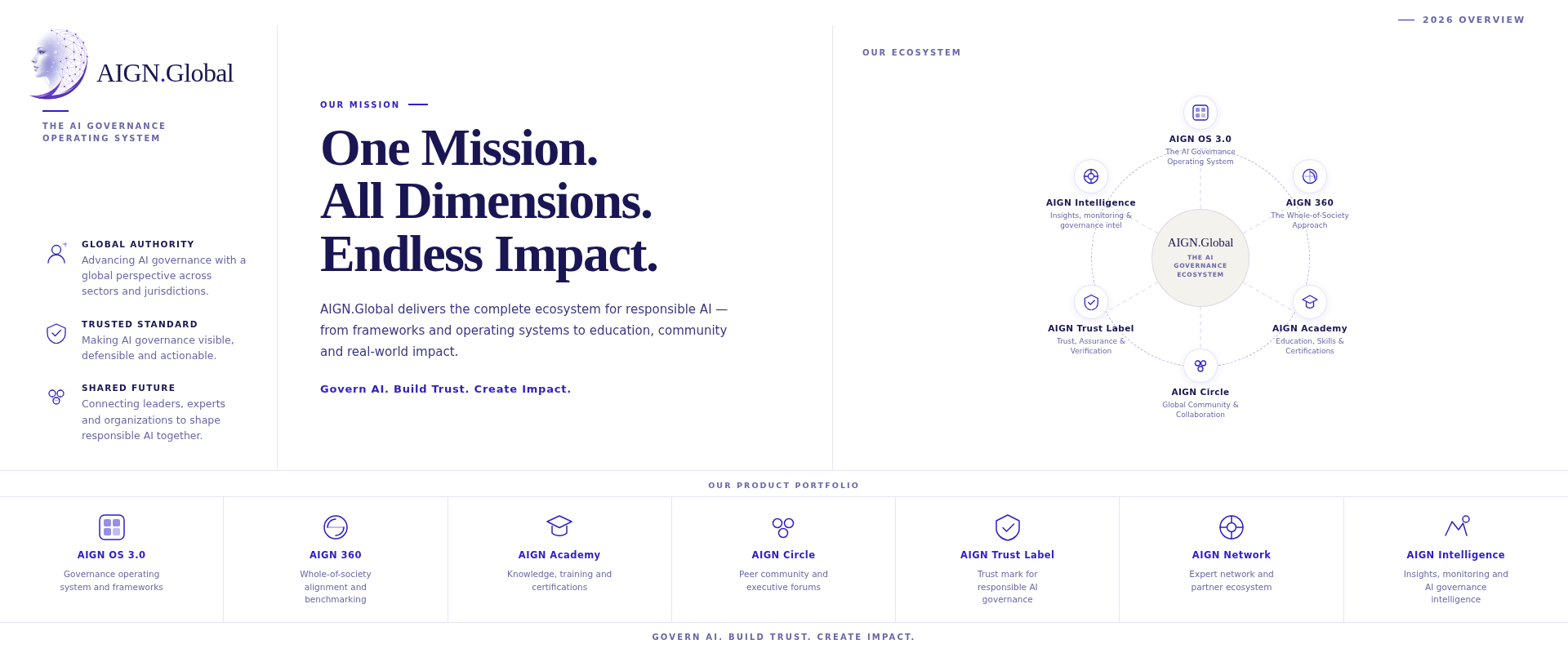

AIGN.global

The AIGN AI Governance Solutions Directory is curated by AIGN.global as part of its global AI Governance infrastructure.

upmann@now.digital