Serious AI Governance needs serious challenge.

As an AI Governance professional — whether you are an interim manager, consultant, internal lead or executive owner — you often operate in a space where few people can truly challenge your reasoning. AIGN Sparring gives you a curated peer group that tests your assumptions before regulators, boards, clients or auditors do.

Peer-led. AIGN-structured. No lectures. No generic webinars. No passive listening. Just serious AI Governance professionals testing each other’s judgment.

Not a course. Not a webinar.

A peer circle with teeth.

AIGN Sparring is a curated, sector-specific peer circle for AI Governance professionals who need structured challenge, defensible judgment and operational clarity — without another course, webinar or generic community.

Your group is peer-led, but not unstructured. AIGN provides the sparring formats, rules of engagement and shared governance language. The value is not facilitation — it is the quality of the room.

Who challenges your thinking right now?

The people in this role — interim manager between mandates, consultant navigating client politics, internal AI governance lead surrounded by colleagues who do not speak the language — often have no genuine peer to test their reasoning against.

“Am I classifying this AI use case correctly under the EU AI Act, or am I missing something that will become visible in an audit?”

“My board wants a governance framework by next quarter. Is my approach actually defensible — or just good-looking?”

“Everyone in my organisation nods along. Who is in a position to tell me when my reasoning is wrong?”

“DORA has applied since January 2025. ISO 42001 is due. The Digital Omnibus is reshaping everything. How do I keep up — alone?”

Find your industry.

Find your sparring partner.

AI Governance in a bank is not the same as in a hospital. Each group is built around one industry — same regulatory pressure, same operational reality. Select your sector to see the current sparring topics.

“DORA has applied since 17 January 2025. Where exactly does AI governance end and ICT risk management begin — and who owns the overlap in practice?”

BaFin now explicitly classifies AI as ICT risk under DORA. The question is no longer whether digital operational resilience matters — but how AI-related ICT risks are governed in practice.

“Our credit scoring model is likely high-risk under the AI Act. Under the current timeline, many high-risk obligations apply from 2 August 2026 — are we building the right foundations now?”

Even if political simplification or deferral discussions continue, organisations cannot wait to build documentation, oversight and auditability. The preparation window is now.

“How do I govern an AI vendor whose subcontractors I have never seen — and still satisfy DORA’s third-party requirements?”

Third-party risk chains in AI procurement are opaque. DORA requires full oversight down the chain — a challenge most governance teams have not yet structurally resolved.

“Our diagnostic AI tool sits between MDR and the EU AI Act. Which framework governs — and what if the requirements conflict?”

Medical device regulation and the AI Act overlap for clinical AI tools. The interplay between CE marking and AI Act Article 6 obligations remains genuinely unresolved for many organisations.

“Who holds clinical accountability when an AI recommendation contributes to a wrong treatment decision?”

Human oversight requirements under the AI Act meet medical liability law. The accountability gap between clinician, developer and deployer is widening as AI moves deeper into clinical workflows.

“How do we govern AI in clinical trials without slowing down timelines that cost months and millions?”

AI in drug development is under increasing regulatory scrutiny. EMA guidance is evolving and most governance frameworks have not yet caught up with the pace of adoption in research environments.

“We use AI in benefit eligibility decisions. The AI Act classifies this as high-risk. How do we govern something this politically sensitive?”

Annex III of the AI Act explicitly covers AI in public services affecting citizens. Democratic accountability requirements go significantly beyond technical compliance.

“We procured an AI system from a vendor who claims compliance. How do we verify it — without internal technical capacity?”

Most public sector bodies currently rely on vendor self-declarations. Third-party audit requirements under the AI Act are still being operationalised across member states.

“A journalist asks us to explain an AI decision that affected thousands of citizens. What does meaningful transparency look like in practice?”

Transparency obligations in government AI are both a legal and democratic challenge. The explainability tools available rarely match the political and public accountability reality.

“We build on top of a foundation model. Does that make us a provider, a deployer — or both? Getting the role allocation wrong can create serious compliance, contractual and liability exposure.”

The provider/deployer distinction is currently one of the most contested questions of interpretation under the AI Act. Fines under the Act can reach up to 7% of global annual turnover for the most severe infringements.

“Our agentic AI system takes actions autonomously across multiple tools and systems. What does governance look like for something that was not designed with a human in the loop?”

Agentic AI sits in a regulatory grey zone. Cascade failures and emergent misalignment require governance approaches that most frameworks have not yet structurally addressed.

“We ship AI features continuously across multiple EU countries. How do we build governance that keeps pace without becoming a release bottleneck?”

Product-embedded AI governance is architecturally different from traditional compliance. Most frameworks were not designed for continuous deployment cycles at commercial speed.

“Our actuarial models are now AI-driven. How do we govern them under the AI Act without undermining the business logic they were built on?”

Actuarial AI used in pricing and risk selection likely qualifies as high-risk. The tension between model confidentiality and explainability requirements is structurally acute.

“A customer was denied coverage by an AI underwriting system. How do we explain that decision in a way that is both compliant and genuinely understandable?”

Explainability in insurance AI is both a regulatory and reputational obligation. Most available tools produce outputs that regulators and customers cannot practically use.

“DORA has applied since January 2025 and our AI systems touch ICT resilience. Are we managing both regulatory frameworks together — or creating gaps by treating them separately?”

Insurance sits at the intersection of DORA and the AI Act in ways the two regulations did not fully anticipate. The compliance burden is compounding with no proportional increase in team capacity.

“Our predictive maintenance AI contributed to a production failure. Who is liable — and what does that mean for how we document AI decisions going forward?”

EU product liability reform now extends to AI-caused harm. The allocation of responsibility across the AI supply chain remains legally unsettled in many cross-border manufacturing contexts.

“We embed AI into machinery that directly affects worker safety. The AI Act classifies this as high-risk. What does a credible implementation actually require?”

Safety-critical AI in manufacturing triggers the most demanding AI Act requirements — including risk management, technical documentation, human oversight and incident reporting obligations.

“Our OT and IT environments do not share a governance language. How do we build AI oversight that functions coherently across both?”

Operational technology AI governance is a structural blind spot in most frameworks. The cultural and technical gap between OT and IT environments creates genuine and often overlooked compliance exposure.

“AI manages our grid load balancing. A failure would affect hundreds of thousands of people. What does credible governance look like for a system with this level of consequence?”

Critical infrastructure AI falls under the AI Act and the CER and NIS2 directives simultaneously. The governance requirements are the most demanding of any sector and are still being operationalised.

“NIS2 is now law. Our AI systems touch cybersecurity. Are we managing NIS2 and AI Act obligations coherently — or creating compliance gaps at their intersection?”

The intersection of NIS2 and the AI Act in energy creates compounding obligations. Most legal and governance teams are still handling them as separate workstreams — which creates structural risk.

“We use AI for emissions optimisation. Regulators are beginning to ask for audit trails. What does evidence-based governance for algorithmic environmental decisions look like?”

Environmental AI governance is emerging as a distinct regulatory requirement. Documentation standards and audit frameworks for this area are still being developed in real time.

“I advise clients on AI Act compliance. But my own firm uses AI tools whose governance status is unclear. What is the professional exposure?”

Legal professionals advising on AI governance face a credibility and liability question about their own AI tool usage — a challenge that bar associations and professional bodies are only beginning to address.

“A client wants me to validate their AI governance framework. I am not a technical expert. What does professional liability look like in this context?”

The market for AI governance advisory is growing faster than the professional standards that govern it. Advisors are increasingly being asked to vouch for technical realities they cannot independently verify.

“We use LLMs for legal research and drafting. How do we govern hallucination risk in a professional context where accuracy is a direct liability question?”

LLM use in legal work creates professional responsibility challenges that existing frameworks did not anticipate. Oversight, verification and documentation requirements remain structurally unresolved.

Peer-led.

AIGN-structured.

Three rotating formats. Your group decides which fits the session. You bring the problem. The group brings the pressure. AIGN brings the structure that makes it rigorous.

Hot Seat

One member brings a live governance challenge. The others challenge every assumption — as regulator, auditor, board member, journalist. 60 minutes of structured pressure. 30 minutes of synthesis. You leave with clarity, not reassurance.

Red Team

One member defends their governance strategy. The rest systematically try to break it. Stress-test your reasoning before the real audit does. Better to find the gaps here than in front of a regulator or a board.

Regulation Radar

Each member brings one regulatory development the others have not yet seen. Collective verdict: material or noise? What does it require in practice? Not a news digest — a collective judgment exercise.

Four steps.

One commitment.

You express interest

There is no application form on this page. To express interest, email AIGN directly with a brief description of your role, sector and current AI Governance challenge.

Patrick curates your group

Every serious request is reviewed personally by Patrick Upmann, founder of AIGN. Groups are built for productive tension — diverse roles, same industry, no two people in identical functions or organisations.

Your group is confirmed

Once all 10 members are confirmed, you receive your first session date and group channel access. Membership runs monthly from the first session date.

Your group runs itself

No external moderator. Your group sets the topics, chooses the format, determines the pace. AIGN provides the sparring formats, rules of engagement and the shared AIGN OS governance language that makes the sessions substantive.

One price.

No tiers. No upsells.

€150

per month

Billed monthly · Cancel anytime

A serious room for serious AI Governance judgment.

Built for professionals who do not need another webinar — but a trusted sector circle where their AI Governance decisions are challenged before they become board, audit, regulatory or client exposure.

Founding Cohort Membership

Sector-specific AI Governance Sparring

€150

per month

Billed monthly

Cancel anytime

This is not an open community. Every group is curated to create serious peer challenge, protect trust and avoid superficial discussion. When a group is full, it is closed.

- 2 structured sparring sessions per month: 90 minutes each of live case challenge, red-team review and sector-specific judgment testing.

- 10 members maximum: Personally curated by Patrick Upmann to protect quality, trust and relevance.

- Industry-specific peer circle: Same regulatory pressure, same operational reality, no cross-sector small talk.

- Bring your real AI Governance cases: Classification, oversight, vendor risk, auditability, board defensibility and regulatory interpretation.

- AIGN OS as shared governance language: Risk classification, accountability, oversight, evidence, auditability and defensibility.

- Private sector channel between sessions: For ongoing questions, regulatory signals and peer feedback.

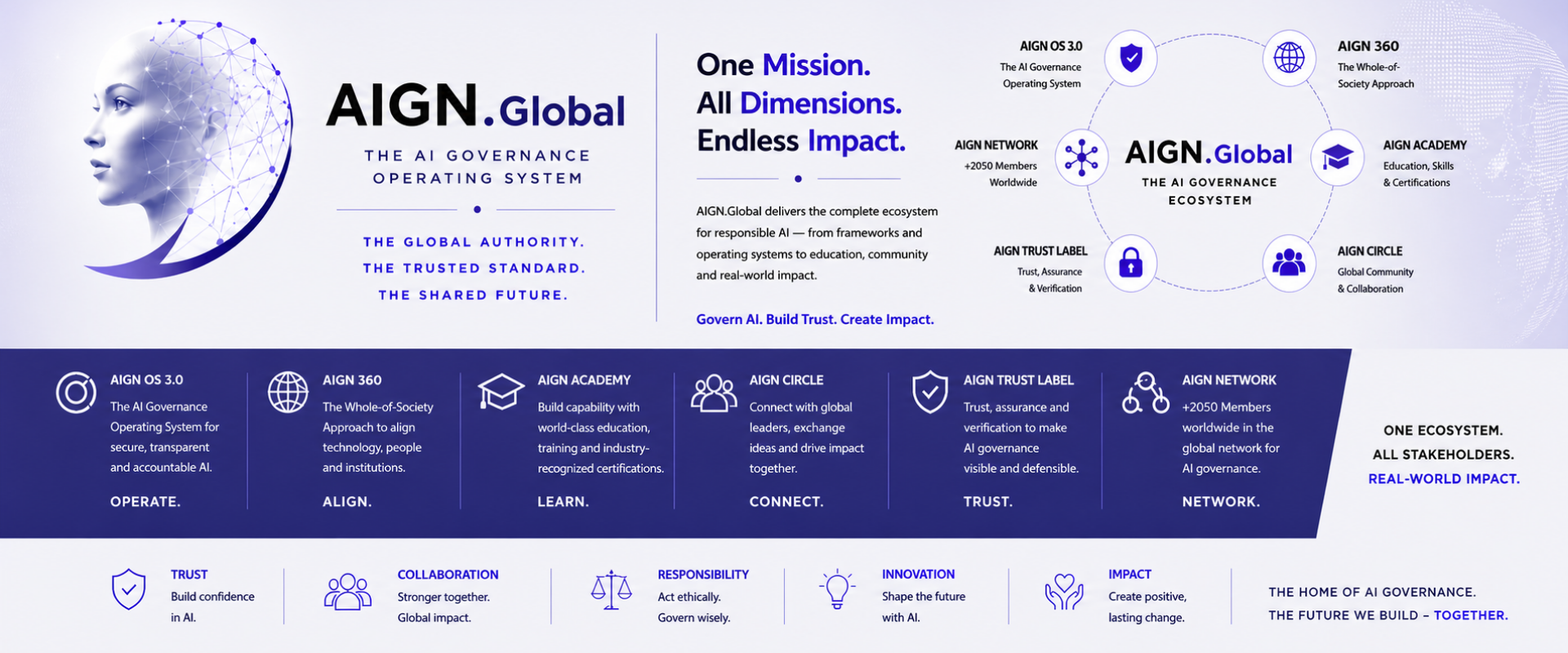

- Connection to the AIGN Global Network: 2,500+ LinkedIn members, 17,500+ followers and a growing global AI Governance ecosystem.

Founding Cohort members keep this introductory rate as long as they remain active members. Future cohorts may be priced differently.

Test your AI Governance judgment before the market, the board or the regulator does.

Every application is read personally. If your profile fits, Patrick or the AIGN team will follow up with you directly.

Apply for AIGN Sparring

The Founding Cohort is Financial Services. Applications close when 10 qualified members are confirmed — first reviewed, first in.

To express interest, please send a short email with your role, sector and current AI Governance challenge.

Contact AIGN →

Questions? message@now.digital