Make AI Governance visible, evidence-based and defensible.

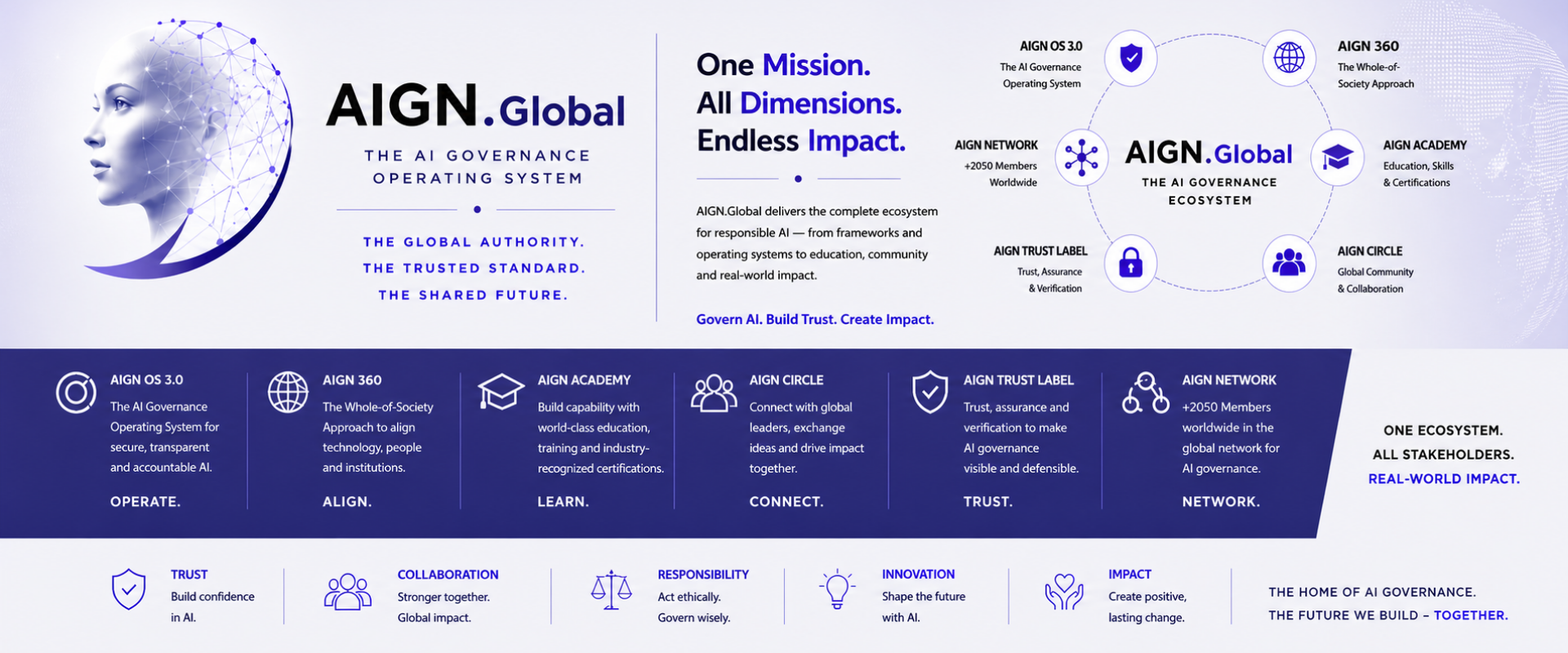

The AIGN Trust Label is designed as a visible trust signal for customers, partners, boards and audit-facing governance processes. It is aligned with the EU AI Act, ISO/IEC 42001, GDPR, NIS2 and DORA.

What it isn’t: A legal certification, a regulatory approval, or a substitute for statutory audits.

Compliance is invisible. Trust must be shown.

Organisations invest heavily in AI governance — yet struggle to make their maturity visible to auditors, customers and boards. The AIGN Trust Label closes that gap with structured evidence.

Auditors expect evidence

Policies alone are not enough. Audit-facing processes increasingly require demonstrable, traceable governance — controls, decision records, accountability paths.

Customers ask the question

"How do you govern your AI?" is becoming standard in RFPs, procurement and B2B contracts. Without structured evidence, deals slow down.

Boards need defensibility

Under the EU AI Act and NIS2, leadership accountability is growing, and NIS2 explicitly allows management bodies to be held liable for infringements. Documented diligence becomes a strategic protection.

Eight structured governance dimensions.

The AIGN Trust Label assessment is based on the AIGN OS reference architecture and reviews structured evidence across eight dimensions of AI governance maturity.

AI System Inventory & Classification

Documented inventory of AI systems, risk classification, and scope of governance application across the organisation.

Governance Roles & Accountability

Defined roles, RACI assignment, AI governance bodies, escalation paths and reporting lines to leadership.

Risk & Impact Assessment Process

Structured AI risk assessments, including fundamental rights impact assessments where applicable under the EU AI Act.

Human Oversight & Escalation

Documented human-in-the-loop mechanisms, override pathways and escalation procedures for AI decisions.

Documentation & Decision Logs

Traceable model documentation, design decisions, training data records and audit-relevant logs.

Vendor & Third-Party AI Governance

Governance of external AI providers, foundation model usage, and contractual safeguards along the supply chain.

Incident, Monitoring & Change Management

Continuous monitoring, drift detection, incident response procedures and managed change for AI systems.

Board Reporting & Defensibility

Reporting cadence to leadership, documented diligence and evidence packages for audit-facing processes.

Three labels. One trusted methodology.

Each label is awarded after a structured assessment based on the AIGN OS reference architecture. Tiers reflect scope and target context — not quality of governance.

AIGN Trust Label · Certified

The flagship governance signal. Confirms documented operating model, control structure and accountability across an organisation’s AI systems.

- Full AIGN OS-based assessment (8 dimensions)

- 25+ governance criteria reviewed

- Regulatory mapping (EU AI Act, GDPR, ISO/IEC 42001)

- Public verification page · Digital badge & seal

- 12-month validity · Documented assessment record

AIGN Trust Label · Excellence

Advanced tier for organisations operating high-risk AI under the EU AI Act, NIS2 and DORA. Adds deep technical validation, third-party governance and board defensibility evidence.

- Extended assessment · 50+ criteria

- AI Risk & Fundamental Rights Assessment

- Model inventory · Lifecycle & bias review

- Third-party / vendor governance review

- Board-level defensibility report

- Surveillance reviews · Documented assessment record

AIGN Trust Label · Education

Dedicated trust label for educational institutions. Recognises structured, responsible and transparent use of AI in learning environments — including governance, student protection and institutional oversight.

- AI usage & ethics framework for education

- Student protection & responsible AI policies

- Teacher & staff AI governance enablement

- Institutional oversight & accountability

- Public trust signal for parents, partners, authorities

- Adapted AIGN OS assessment for education

A world first in AI Governance for Education.

In September 2025, Fayston Preparatory School in Seoul, South Korea became the first institution in Asia to receive the AIGN Education Trust Label — and the first school worldwide to be recognised under the AIGN governance framework for education.

This milestone shows that AI governance is no longer abstract: it is teachable, certifiable and operational.

to receive the label

independently assessed

real-world pilot

What the label actually delivers.

Beyond a logo: practical business outcomes from a structured, evidence-based governance signal.

Audit-facing evidence

Structured documentation, controls and decision records ready for internal audit, customer reviews and supervisory inquiries.

Sales acceleration

Reduces repetitive governance evidence requests, shortens procurement discussions and supports faster trust-building in regulated B2B sales cycles.

Board defensibility

A documented external assessment gives leadership evidence of due diligence under the EU AI Act, NIS2 and sector rules.

Customer & stakeholder trust

Public verification page, digital badge and seal — usable on website, contracts, ESG reporting and investor communication.

EU AI Act penalties may reach up to €35M or 7% of global annual turnover, whichever is higher, for the most severe violations.

Documented governance, risk assessment and diligence evidence have become commercially relevant — both for regulatory exposure and for customer-facing procurement, partner due diligence and board accountability.
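The penalty ceiling quoted above is simple arithmetic, and a short sketch makes the exposure concrete. The Python below assumes the rule in Article 99 of the EU AI Act: for the most severe tier, the higher of the fixed amount and the turnover percentage applies to most undertakings, and the lower of the two applies to SMEs and start-ups. The function name `max_fine_eur` and the `is_sme` flag are hypothetical, introduced only for illustration, and are not part of the AIGN assessment.

```python
FIXED_CAP_EUR = 35_000_000   # fixed ceiling for the most severe tier
TURNOVER_PERCENT = 7         # percentage of global annual turnover


def max_fine_eur(global_turnover_eur: int, is_sme: bool = False) -> int:
    """Return the upper bound of the fine for the most severe tier.

    Integer arithmetic (whole euros) avoids floating-point rounding.
    Assumption: non-SMEs face the higher of the two figures,
    SMEs and start-ups the lower.
    """
    turnover_cap = global_turnover_eur * TURNOVER_PERCENT // 100
    if is_sme:
        return min(FIXED_CAP_EUR, turnover_cap)
    return max(FIXED_CAP_EUR, turnover_cap)


if __name__ == "__main__":
    # EUR 1bn turnover: 7% (EUR 70M) exceeds the fixed EUR 35M ceiling.
    print(max_fine_eur(1_000_000_000))               # 70000000
    # EUR 100M turnover: 7% is EUR 7M, so the fixed ceiling applies.
    print(max_fine_eur(100_000_000))                 # 35000000
    # Same turnover for an SME: the lower figure applies.
    print(max_fine_eur(100_000_000, is_sme=True))    # 7000000
```

The figure returned is a ceiling, not a prediction. Actual fines depend on the nature of the infringement and on the supervisory authority's assessment.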

From assessment to visible trust in 8–12 weeks.

A structured five-step assessment process based on the AIGN OS reference architecture and documented assessment criteria.

Scope

Define AI use cases, systems, vendors, regulatory exposure and assessment boundary.

Assess

AIGN OS-based maturity assessment. Document review, interviews, control mapping.

Validate

Evidence review, gap analysis, technical validation of controls and accountability structures.

Award

Assessment decision based on documented criteria. Issuance of label, public listing, digital seal.

Sustain

Surveillance reviews, regulatory updates, reassessment after 12 months.

Four labels. Individual scoping.

Pricing depends on scope, number of AI systems, regulatory exposure and assessment depth. Each engagement starts with a structured scoping conversation and an individual proposal.

AIGN Trust Readiness

For SMEs and first governance visibility

- AIGN OS Light Assessment

- Initial AI use case review

- Foundational governance criteria

- Digital readiness badge + verification page

- 12-month validity

- Self-service portal access

AIGN Trust Label · Certified

For enterprises and public sector

- Full 8-dimension AIGN OS Assessment

- Multi-use-case review

- 25+ governance criteria

- Regulatory mapping (EU AI Act, GDPR, ISO/IEC 42001)

- Board-ready assessment report

- Public listing & digital seal

- Surveillance reviews

- Priority advisory access

AIGN Trust Label · Excellence

For regulated sectors and critical infrastructure

- Extended assessment · 50+ criteria

- Enterprise-wide AI use case scope

- AI Risk & Fundamental Rights Assessment

- Model inventory & lifecycle review

- Third-party / vendor governance review

- Sector mapping (NIS2, DORA, sector rules)

- Board defensibility report

- Quarterly surveillance reviews

- Dedicated AIGN governance lead

AIGN Trust Label · Education

For schools, universities and academies

- Adapted AIGN OS assessment for education

- AI usage & ethics framework

- Student protection & responsible AI policies

- Teacher & staff governance enablement

- Institutional oversight structures

- Public trust signal · Digital badge & seal

- Aligned with OECD, UNESCO, NIST, ISO/IEC 42001

Every engagement begins with a scoping conversation.

We assess your AI portfolio, regulatory exposure and target audience — then deliver an individual assessment proposal with defined scope, timeline and investment.

Multi-year and group engagements available · Education tier first awarded September 2025 · Seoul, KR

AIGN Trust Label is not a legal certification.

It is an evidence-based governance signal that helps organisations make AI governance visible, reviewable and defensible.

Move from policy to provable trust.
