Purpose. Benefits. Boundaries.

The world’s first certifiable AI Governance Operating System — turning regulation into architecture, compliance into capability, and principles into infrastructure.

AI governance has evolved beyond ethics and guidelines. It is becoming a measurable system — the missing infrastructure of the intelligent age.

AIGN OS defines that system.

It translates global regulation and standards (EU AI Act, Data Act, ISO/IEC 42001, NIST AI RMF, OECD Principles) into an operational, certifiable governance architecture, measurable through the ASGR (AIGN Systemic Governance Readiness) Index.

This page explains what AIGN OS truly is, why it matters, who it’s built for — and what it deliberately is not.

What Is AIGN OS?

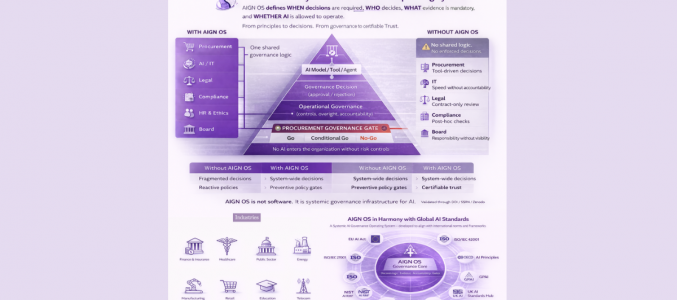

AIGN OS is not a software product. It is an operational system for governing intelligent technologies.

Designed like a digital operating system, AIGN OS structures and certifies how AI and data are governed — across teams, tools, standards, and geographies. It combines regulatory logic, ethical governance, and real-time decision support into one scalable infrastructure.

It’s a new category:

→ Not just a framework

→ Not just a checklist

→ Not another platform

→ But an operational AI Governance OS

Why AIGN OS Changes the Game

Traditional governance tools manage policies — AIGN OS builds systems.

Where most frameworks end with documentation, AIGN OS begins with design: it embeds legality, accountability, and trust directly into the architecture of intelligent systems.

By connecting law, organization, and technology in one certifiable structure, AIGN OS transforms governance from interpretation into infrastructure.

It operationalizes what global regulation demands — and what institutions have so far lacked: a verifiable system of control that works across borders, sectors, and technologies.

At its core, AIGN OS integrates three dimensions:

- The AIGN Legal Layer: turning statutory and standards-based obligations (EU AI Act, GDPR, ISO/IEC 42001, NIST AI RMF) into actionable governance control points.

- The ASGR Index (AIGN Systemic Governance Readiness): measuring systemic readiness and maturity across organizations and jurisdictions.

- The Trust Infrastructure: providing certification, auditability, and public visibility through Trust Labels and verification records.

Together, these components create the world’s first Legal-to-Architecture Continuum™ — a closed compliance loop where obligations become design, governance becomes measurable, and trust becomes certifiable.

Unlike static checklists or fragmented toolkits, AIGN OS provides a scalable, certifiable, and updateable governance backbone: the missing system layer between regulation, implementation, and oversight.
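To make the idea of "obligations become control points, and control points become measurable" more concrete, here is a minimal sketch in Python. It is an illustration only: the `ControlPoint` structure, the example control descriptions, and the simple coverage ratio are assumptions made for this example. They are not the actual AIGN Legal Layer control catalogue or the ASGR Index methodology, neither of which is specified on this page.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ControlPoint:
    """One governance control derived from an obligation (hypothetical model)."""
    control_id: str
    source: str        # regime the obligation comes from, e.g. "EU AI Act"
    description: str
    implemented: bool  # True if evidence of implementation exists


def readiness_by_source(controls):
    """Return the share of implemented controls per regime, each in [0, 1]."""
    totals = {}
    done = {}
    for c in controls:
        totals[c.source] = totals.get(c.source, 0) + 1
        done[c.source] = done.get(c.source, 0) + (1 if c.implemented else 0)
    return {src: done[src] / totals[src] for src in totals}


def overall_readiness(controls):
    """Return the share of all controls that are implemented (0.0 if none exist)."""
    if not controls:
        return 0.0
    return sum(1 for c in controls if c.implemented) / len(controls)


# Illustrative entries only; not an official control list.
controls = [
    ControlPoint("CP-001", "EU AI Act", "Risk classification recorded per AI system", True),
    ControlPoint("CP-002", "EU AI Act", "Human oversight role assigned", False),
    ControlPoint("CP-003", "GDPR", "Data protection impact assessment on file", True),
    ControlPoint("CP-004", "ISO/IEC 42001", "AI management system policy approved", True),
]

print(readiness_by_source(controls))
print(overall_readiness(controls))
```

In this toy model, a per-regime ratio shows where evidence is missing, while the overall ratio gives a single comparable number. A real readiness index would presumably weight controls and dimensions rather than count them equally.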

What Does AIGN OS Do?

AIGN OS provides a complete, certifiable system for governing artificial intelligence and data — across laws, sectors, and technologies.

It doesn’t interpret compliance; it builds it.

Like a digital operating system, AIGN OS connects legal duties, organizational roles, and technical controls into one systemic governance architecture.

Every layer, framework, and toolkit is designed to make responsible AI measurable, auditable, and certifiable.

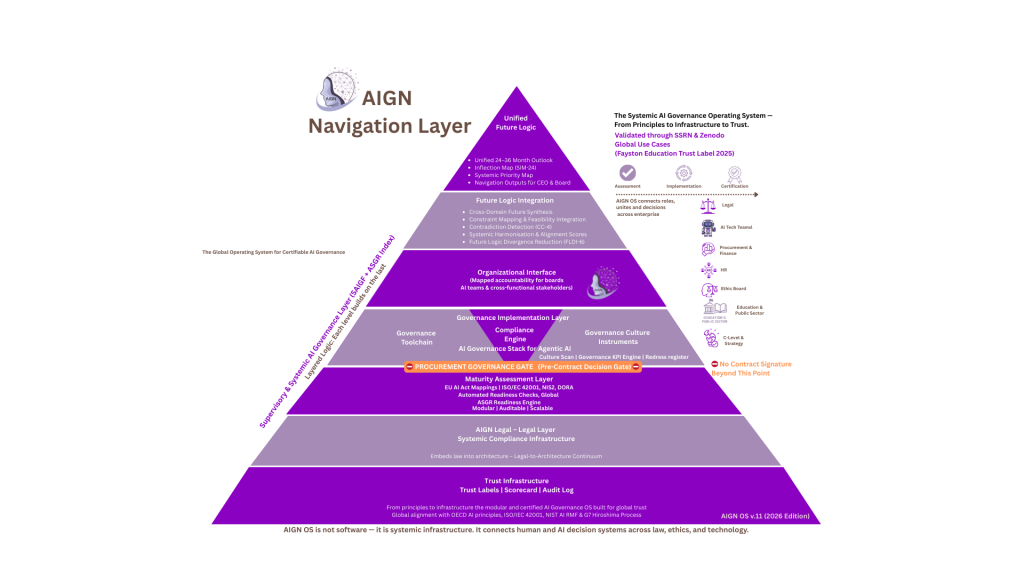

Through its 8-Layer Architecture, AIGN OS delivers:

- Trust Infrastructure – the verifiable foundation: trust labels, audit logs, certification registries, and assurance dashboards.

- AIGN Legal Layer – the systemic compliance infrastructure that transforms regulatory text (EU AI Act, GDPR, ISO/IEC 42001, NIST AI RMF) into operational control points.

- Maturity Assessment (ASGR Index) – the readiness engine benchmarking legal, ethical, and organizational maturity across regimes and institutions.

- Governance Implementation Layer – the operational core: checklists, workflows, and toolchains that translate regulation into daily practice.

- Organizational Interface – mapping accountability between boards, executives, legal, and technical teams.

- Framework Modules – seven modular frameworks for global, education, SME, agentic AI, culture, data, and supervisory governance.

- Trust Kernel – the normative foundation defining human-centric, transparent, and accountable AI by design.

- Supervisory AI Governance (SAIGF) – the oversight layer ensuring fiduciary compliance, maturity certificates, and annual governance statements.

Together, these layers create a unified Operating System for Responsible AI Governance — a structure that turns fragmented regulations and frameworks into one certifiable continuum of control.

Through this system, AIGN OS enables any organization to:

- Govern AI and data across their full lifecycle

- Embed legal compliance directly into technical and organizational design

- Measure readiness and maturity with the ASGR Index

- Demonstrate verifiable trust through AIGN Trust Labels and Certifications

- Build systemic credibility with regulators, investors, and citizens

AIGN OS doesn’t replace your tools — it governs how they work together.

It integrates with existing environments like Microsoft 365, Power BI, or Notion — making governance systemic, not bureaucratic.

Who Is AIGN OS For?

AIGN OS was built for institutions that must turn AI compliance into AI capability — across public, private, and educational sectors.

It enables leadership teams, regulators, and educators to govern intelligent systems with measurable trust, legal certainty, and systemic consistency.

| Audience | Governance Need | AIGN OS Value |
|---|---|---|
| Governments & Regulators | Build national AI governance infrastructures; align ministries and agencies; benchmark readiness internationally. | Legal-to-Architecture Continuum™ for national compliance, ASGR benchmarking, and citizen trust through visible certifications. |
| Corporations & Enterprises | Ensure compliance across EU AI Act, ISO/IEC 42001, and global regimes; defend fiduciary and ESG duties. | End-to-end governance OS with board-level oversight (SAIGF), audit readiness, and systemic risk control. |
| Supervisory Boards & Directors | Fulfill fiduciary oversight obligations under Caremark and ARAG/Garmenbeck; demonstrate accountability to investors and regulators. | Supervisory AI Governance Framework™ (SAIGF): codified oversight, maturity certification, and Annual AI Governance Statement. |
| Startups & SMEs | Navigate complex compliance with limited resources; gain early market credibility. | Lightweight entry pathway with AI Governance Readiness Check, SME Governance Framework, and scalable trust labels. |
| Education Systems & Universities | Manage AI use in classrooms and research; align with UNESCO, COPPA/FERPA, and ministry guidelines. | Education Framework + AIGN Education Trust Label, certifying safe, ethical, and transparent AI adoption. |
| Healthcare, Finance & Critical Infrastructure | Comply with high-risk AI classification, cross-sector regulation, and audit trails. | Domain-aligned frameworks, ISO/NIST interoperability, and automated readiness benchmarking. |
| Civil Society & Research Institutions | Demand transparency, inclusion, and accountability in public and private AI use. | Governance transparency tools, impact metrics, and access to certified AI Governance indicators. |

In Essence

AIGN OS connects everyone responsible for the safe and lawful use of AI, from the lawmakers who define the rules, to the boards who oversee them, to the engineers and educators who bring them to life.

It transforms fragmented accountability into one continuous governance system: auditable by regulators, trusted by citizens, and certifiable across borders.

Why an "Operating System"?

Because governance is no longer a policy — it’s an operating system.

Most organisations still treat AI governance as a checklist or a report.

But intelligent systems operate continuously, adaptively, and across borders — and so must governance.

AIGN OS replaces static control with systemic architecture, ensuring that every rule, role, and responsibility is connected, traceable, and certifiable.

Just as a digital operating system coordinates programs and users, AIGN OS coordinates governance across laws, institutions, and technologies:

| Core Element | Purpose | Outcome |
|---|---|---|
| AIGN Legal Layer | Translates legal obligations into actionable control points. | Law becomes architecture: measurable, auditable, and certifiable. |
| ASGR Index | Measures systemic readiness and maturity. | Creates global comparability and evidence-based governance progress. |
| Trust Infrastructure | Embeds audit trails, certifications, and labels into every governance process. | Enables verifiable trust across sectors and jurisdictions. |
| Supervisory AI Governance Framework (SAIGF) | Integrates fiduciary oversight and board accountability. | Connects corporate governance with regulatory assurance. |

Together, these elements make AIGN OS the first governance system that runs like technology itself: modular, scalable, and interoperable across all sectors and standards.

It is not software.

It is the architecture that defines how responsible AI is governed — legally, operationally, and ethically.

That is why AIGN OS is called an Operating System for Responsible AI Governance.

What AIGN OS Is Not

AIGN OS defines a new category:

It is not another framework, not a software platform, and not a consultancy method.

It is the world’s first Systemic Governance Operating System — a certifiable, interoperable, and IP-protected architecture that turns global regulation into measurable design.

| ❌ Not This | ✅ But This |
|---|---|
| A software tool or SaaS product | A certifiable governance infrastructure, independent of any vendor or platform |
| A static framework or checklist | A dynamic, layered architecture that evolves with regulation |
| A consulting toolkit | A scientifically published, DOI-registered operating system with standardised governance logic |
| A legal guideline | A compliance-to-infrastructure mapping that turns law into architecture |
| A set of principles | A measurable system with readiness metrics, controls, and certification layers |
| A theoretical concept | A proven governance backbone, piloted across governments, enterprises, and education systems |
| A vendor lock-in | A modular, open, interoperable design built for global adoption |
| A one-time audit | A living, certifiable governance infrastructure that enables continuous assurance |

In Essence

AIGN OS doesn’t replace tools — it governs how they work together.

It complements your existing environments (Microsoft 365, Power BI, Notion, SAP, or local data systems) and makes governance systemic, not bureaucratic.

This distinction matters because AI governance is no longer an add-on — it’s an infrastructure layer of the intelligent age.

That is why AIGN OS was designed as the Operating System for Responsible AI Governance: legally grounded, operationally scalable, and globally certifiable.

What Problem Does AIGN OS Solve?

Artificial Intelligence is advancing faster than the systems designed to govern it.

Across sectors and regions, one reality has become evident: regulation alone doesn’t create governance.

Organisations, governments, and boards face a growing governance gap between legal intent and operational reality:

- AI laws multiply — but compliance remains fragmented.

- Ethical guidelines exist — but accountability is unclear.

- Audits are demanded — but systemic evidence is missing.

- Trust is expected — but rarely measurable.

This is where AIGN OS intervenes:

It replaces reactive, fragmented compliance efforts with a systemic, certifiable governance architecture that connects laws, institutions, and infrastructures.

From Fragmentation to Systemic Control

| The Challenge | What Happens Today | AIGN OS Solution |
|---|---|---|
| Regulatory Fragmentation | Organisations face overlapping duties (EU AI Act, ISO/IEC 42001, GDPR, NIST AI RMF) without a unified structure. | AIGN Legal Layer integrates all regimes into one Legal-to-Architecture Continuum™. |
| Operational Gaps | Governance lives in silos: legal, IT, ethics, and risk management don’t share a single architecture. | Governance Implementation Layer operationalises shared procedures, roles, and decision rights. |
| Lack of Measurable Readiness | Most entities cannot quantify their AI governance maturity or prove it externally. | ASGR Index provides a global benchmark, comparable across industries and jurisdictions. |
| Board-Level Accountability | Oversight obligations (Caremark, ARAG/Garmenbeck) lack concrete governance tools. | SAIGF Framework embeds fiduciary oversight and measurable AI risk reporting at board level. |
| Erosion of Public Trust | Citizens, investors, and regulators cannot see how AI decisions are governed. | Trust Infrastructure introduces verifiable labels, certificates, and audit records visible to all stakeholders. |

Systemic Impact

By uniting law, ethics, and engineering, AIGN OS creates a continuous governance loop: from legal intent → to operational control → to visible, certifiable trust.

It transforms governance from:

- Interpretation → Architecture

- Principles → Proof

- Compliance → Capability

This systemic model enables governments, enterprises, and institutions worldwide to govern AI as infrastructure: reliably, transparently, and sustainably.

In Summary

The problem: AI governance today is fragmented, reactive, and unverifiable.

The solution: AIGN OS — the world’s first Operating System for Responsible AI Governance — turns laws into architecture and trust into measurable infrastructure.

It bridges the gap between legal mandates and real-world action — and gives organizations a path to certify their responsibility.

What Makes It Unique?

In a world flooded with checklists, toolkits, and ethics principles, AIGN OS stands apart as a systemic governance infrastructure: the first globally certifiable Operating System for Responsible AI Governance.

Where others interpret the law, AIGN OS architects it.

Where others measure risk, AIGN OS measures governance maturity.

Where others offer tools, AIGN OS offers structure, connecting all roles, rules, and responsibilities through one certifiable architecture.

AIGN OS vs. Conventional Governance Approaches

| Feature | AIGN OS | Frameworks & Guidelines | SaaS / Compliance Tools |
|---|---|---|---|
| Scientific Legitimacy | DOI-registered, peer-recognised as part of the scientific record (SSRN / Zenodo) | Policy-level or soft-law; rarely standardised | Proprietary, non-scientific |
| Legal Integration | AIGN Legal Layer maps EU AI Act, GDPR, ISO 42001, NIST RMF, OECD Principles | Advisory crosswalks only | Limited to checklists or templates |
| Certifiability | Fully certifiable architecture with Trust Labels & SAIGF Maturity Certificates | Non-certifiable frameworks | Vendor-controlled attestations |
| Systemic Readiness Measurement | ASGR Index – quantifies governance maturity across 7 dimensions | Often qualitative or self-declared | No cross-regime comparability |
| Board-Level Oversight | SAIGF Framework for fiduciary accountability | Absent | Not applicable |
| Trust Infrastructure | Built-in audit trails, labels, verification registry | No visible assurance layer | Closed system, opaque to public |
| Interoperability | API-ready, tool-agnostic, globally aligned | Static and jurisdiction-bound | Locked to vendor architecture |
| Intellectual Property Status | IP-protected governance OS with enforceable authorship rights | Open or public-domain principles | Proprietary, no scientific protection |
| Scalability | Deployable across sectors and resource levels | Requires adaptation | Limited by license or stack |
| Outcome | Compliance becomes measurable, trust becomes visible | Compliance remains declarative | Compliance remains siloed |

In Essence

AIGN OS is not another framework — it’s the infrastructure that makes frameworks work.

It brings together the legal foundation, operational logic, and trust mechanisms needed to make responsible AI measurable and certifiable worldwide.

By design, it transforms:

- Law → Architecture

- Governance → Infrastructure

- Trust → Evidence

That is what makes AIGN OS the Operating System for Responsible AI Governance: a living standard for governments, enterprises, and educators in the age of intelligent systems.

Get Started

AI governance is no longer a concept — it’s an operating system.

The question is not whether you need governance, but how systemically it is embedded into your organisation, ministry, or institution.

AIGN OS provides that system — measurable, certifiable, and globally recognised.

How to Begin

| Step | Action | Outcome |
|---|---|---|
| 1. Governance Orientation | Join an AI Governance Briefing – a 60-minute introduction to systemic governance logic. | Understand the 8-Layer Architecture and identify your governance position. |
| 2. Readiness Assessment | Conduct the AIGN OS Readiness Check – a DOI-registered compliance baseline. | Receive your Readiness Score, heatmap, and quick wins within 48 hours. |
| 3. Framework Alignment | Map your structure against the AIGN Legal Layer and ASGR Index benchmarks. | Achieve cross-regime alignment and quantify systemic maturity. |
| 4. Trust Certification | Apply for your AIGN Trust Label or SAIGF Maturity Certificate. | Gain verifiable recognition for responsible AI governance. |
| 5. Continuous Governance | Join the AIGN Partner Network – governments, schools, and enterprises advancing global trust infrastructure. | Maintain continuous assurance and readiness across AI, data, and risk. |

Why Start Now

With AIGN OS, governance becomes infrastructure: measurable, certifiable, and sustainable.

AIGN OS is how the world turns AI governance from policy into infrastructure.

It’s the system behind responsible, trusted, and certifiable AI.

The EU AI Act is in force — and compliance will soon define competitiveness.

ISO/IEC 42001 and NIST AI RMF are becoming the global baseline for operational AI management.

Investors, regulators, and citizens are demanding visible trust, not policy declarations.

Legal Notice on Intellectual Property

All content, structures, frameworks, terminologies, and the layered governance architecture of AIGN OS – The AI Governance Operating System are the original intellectual creation of Patrick Upmann. AIGN OS and all its components – including but not limited to frameworks, toolchains, trust labels, indices, licensing models, and governance architectures – are protected under international copyright, trademark, and intellectual property law.

The AIGN OS concept and system have been officially published as a DOI-registered research paper and citable scientific work, thereby establishing prior art, scientific recognition, and enforceable authorship protection. Any unauthorized reproduction, adaptation, distribution, modification, or commercial/public use of AIGN OS – in whole or in part – without the express written consent of the rights holder is strictly prohibited and will result in legal enforcement under applicable international IP law.

© Patrick Upmann – All rights reserved.